Meta’s smart glasses are starting to look less like a novelty and more like a platform. The company has now opened up its Meta Ray-Ban Display glasses to third-party developers, a move that quietly shifts the product from a closed set of built-in features to something more expandable.

“Developers have been experimenting and building hands-free experiences on our AI glasses using camera, audio, and voice. Now, we’re excited to offer a way to present information visually, directly in the moment,” the company said in a blog post.

Until now, the glasses could show messages, AI responses, and a handful of built-in tools. While useful, those features were limited to what Meta itself chose to ship. The real question has always been what happens when outside developers get involved.

How Developers Can Build for the Glasses

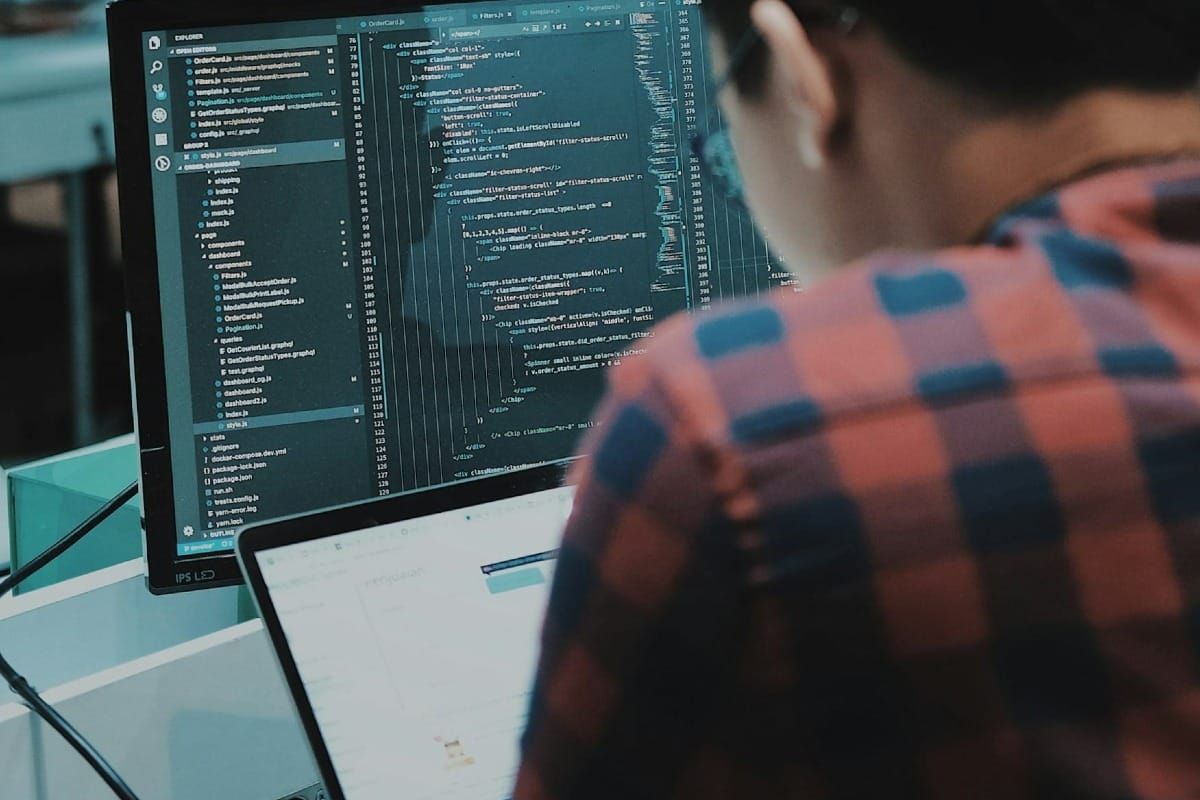

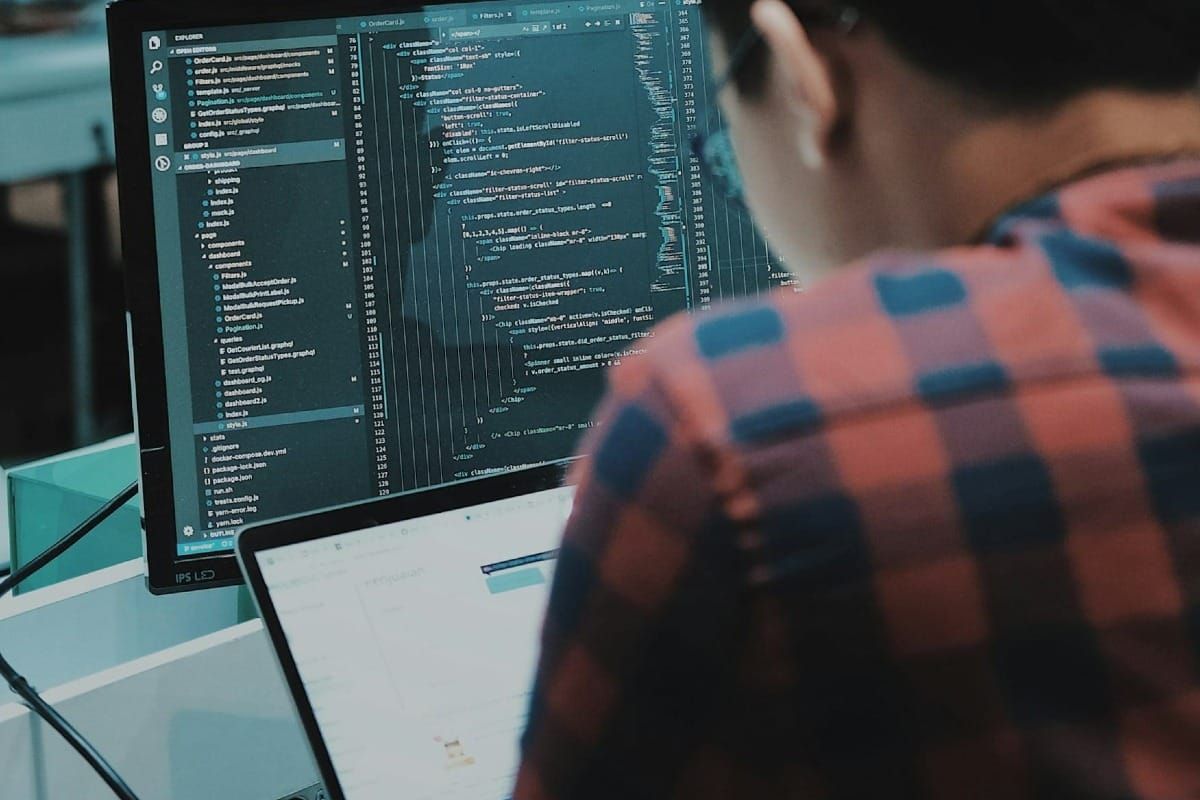

Outside developers can now build apps for the in-lens display in two main ways. One route is through Meta’s Wearables Device Access Toolkit, a native SDK for iOS and Android that lets existing apps extend into the glasses. That means developers can reuse familiar building blocks like text, images, buttons, video, and lists to push parts of their apps directly onto the display.

The second option is more open-ended: web apps. Built with standard HTML, CSS, and JavaScript, these can run like lightweight tools accessed through a URL instead of an app store download. It’s the kind of setup that makes it easier to experiment, whether it’s transit tools, cooking instructions, or quick utility apps.
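As a rough illustration of how small such a web tool can be, here is a minimal sketch in TypeScript that turns a grocery list into a compact HTML card suited to a tiny display. Everything in it is an assumption made for illustration: the function names `escapeHtml` and `renderGlanceCard`, the `card` and `more` class names, and the three-item limit are hypothetical, and none of it comes from Meta’s toolkit or documentation. It relies only on standard string handling.

```typescript
// Hypothetical helpers for illustration only; not part of any Meta SDK.
const HTML_ESCAPES: Record<string, string> = {
  "&": "&amp;",
  "<": "&lt;",
  ">": "&gt;",
  '"': "&quot;",
  "'": "&#39;",
};

// Escape characters that would otherwise be parsed as markup.
function escapeHtml(text: string): string {
  return text.replace(/[&<>"']/g, (ch) => HTML_ESCAPES[ch] ?? ch);
}

// Build a short card: a title, at most `maxItems` list rows,
// and a "+N more" note when the list had to be truncated.
function renderGlanceCard(
  title: string,
  items: string[],
  maxItems = 3, // assumed limit for a small display
): string {
  const shown = items.slice(0, maxItems);
  const hidden = items.length - shown.length;
  const rows = shown.map((item) => `<li>${escapeHtml(item)}</li>`).join("");
  const more = hidden > 0 ? `<p class="more">+${hidden} more</p>` : "";
  return (
    `<section class="card"><h1>${escapeHtml(title)}</h1>` +
    `<ul>${rows}</ul>${more}</section>`
  );
}
```

A page served from an ordinary URL could assign the returned string to an element’s `innerHTML`. Truncating the list rather than scrolling it is a design guess about what reads well at a glance, not a documented requirement.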

On paper, this turns the glasses into something closer to a wearable interface layer than a standalone gadget.

For users, the shift is simple but significant. Instead of waiting for Meta to decide which features matter, developers can now push real-time updates, live data, and small tools directly into a wearer’s line of sight. Think sports scores while walking, grocery lists without pulling out a phone, or navigation prompts that sit quietly in view as the wearer moves through a city.

Interaction is also changing. With Meta’s Neural Band, users can control these apps using subtle hand gestures, including experimental features like handwriting-style input for messaging across WhatsApp, Messenger, and Instagram.

Meta is also layering in new capabilities of its own. Live captions are rolling out for messaging apps, display recording lets users capture what they see alongside audio and on-screen content, and navigation support now stretches across the US and major European cities like London, Paris, and Rome.

None of this feels fully finished yet. There are no third-party apps available at launch, only the tools to build them. But that’s often where platform shifts begin: not with the apps people use today, but with the ecosystem forming around what could be built next.

Meta is betting that once developers start experimenting, the glasses will stop being just a display and start behaving more like a daily interface for small, constant tasks.