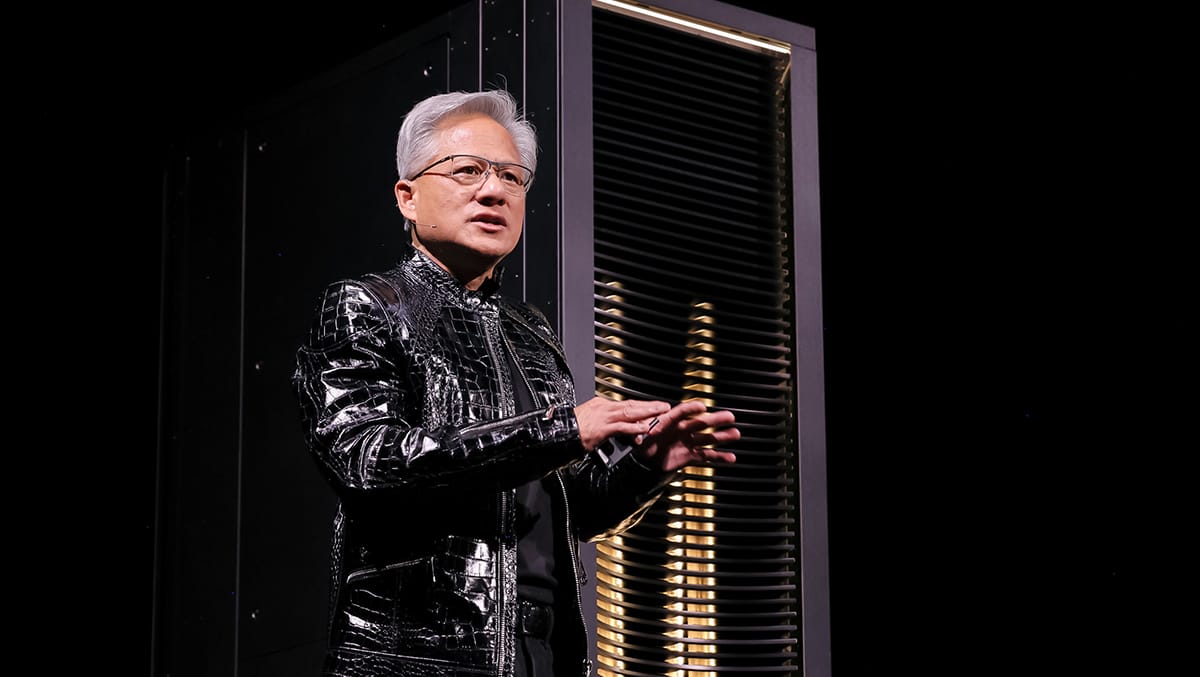

Jensen Huang stood on the GTC stage in San Jose on March 16 and doubled the AI infrastructure projections he made at the same conference one year ago. The NVIDIA CEO confirmed what the industry had been speculating about for months—his company acquired the Groq team and integrated their token accelerator technology into the product roadmap.

Here's everything Jensen Huang announced at the NVIDIA GTC 2026 keynote.

1. NVIDIA Sees $1 Trillion in AI Infrastructure Demand Through 2027

Huang stated that from where he stands today, the company sees "through 2027 at least $1 trillion" in high-confidence demand and purchase orders for its Blackwell, Rubin, and future platforms. This doubles the $500 billion projection he made at GTC 2025 one year ago.

"I know why you're not impressed because all of you had record years," Huang told the audience, referring to NVIDIA's supply chain partners, including 50-year-old, 70-year-old, and 150-year-old companies that all hit revenue peaks in 2025. He added that he's "certain computing demand will be much higher than that."

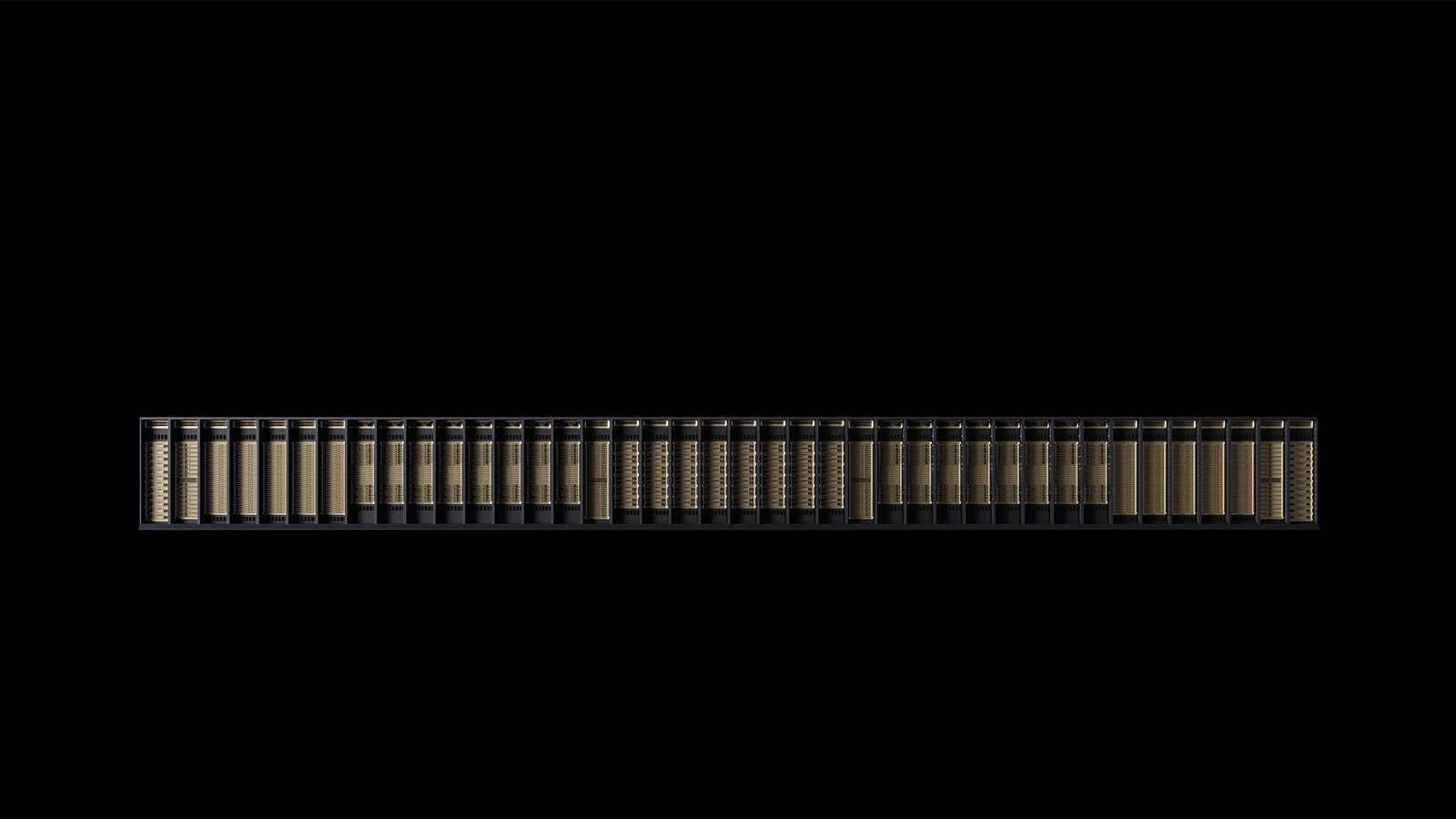

2. Vera Rubin Supercomputer Integrates Seven Chips Across Five Rack Systems

The flagship announcement was Vera Rubin, NVIDIA's next-generation AI infrastructure platform designed for agentic AI workloads. The system integrates seven different chips into five rack-scale computers that operate as one unified supercomputer.

The architecture includes the Vera Rubin GPU with NVLink 72 connecting 72 GPUs, the Vera CPU for orchestration, ConnectX-9 networking, BlueField-4 storage processors, and Spectrum-X Ethernet with co-packaged optics. The complete system delivers 3.6 exaflops of compute with 260 terabytes per second of all-to-all NVLink bandwidth.

Huang explained the design decisions. "Agents have to access memory. KV cache, structured data, unstructured data. It's gonna pound on the storage system really, really hard, which is the reason why we reinvented the storage system." The platform is 100% liquid cooled using 45-degree hot water, eliminating traditional data center cooling infrastructure. Microsoft Azure already has the first Vera Rubin rack operational.

3. NVIDIA Acquired Groq Team and Integrated LP30 Chip Into Product Line

Huang disclosed that NVIDIA "acquired the team that worked on the Groq chips and licensed the technology." The Groq LP30 chip is now in volume production at Samsung and ships in Q3 2026.

Each chip contains 500 megabytes of on-chip SRAM and functions as what Huang called "a deterministic data flow processor" with static compilation designed for ultra-low-latency token generation. NVIDIA's Dynamo software disaggregates inference workloads between Vera Rubin, which handles prefill and attention for high throughput, and Groq chips, which handle decode and token generation for low latency.

Huang recommended deployment strategies. "If most of your workload is high throughput, I would stick with just 100% Vera Rubin. If a lot of your workload wants to be coding and very high valued engineering token generation, I would add Groq to it. I would add Groq to maybe 25% of my total data center." The integration delivers 35 times more throughput per megawatt at premium pricing tiers.

4. Performance Jumped 50x in Two Years Against 1.5x Moore's Law Prediction

Independent analysis from SemiAnalysis validated NVIDIA's performance claims through comprehensive benchmarking. Hopper H200 to Grace Blackwell NVLink 72 delivered 35 times better performance per watt, and SemiAnalysis measured the actual improvement at 50 times.

Huang acknowledged the discrepancy. "Nobody would have expected 35 times higher. I said last year at this time that NVIDIA's Grace Blackwell NVLink 72 was 35 times perf per watt. Nobody believed me. Then SemiAnalysis came out and Dylan Patel had a quote. He accused me of sandbagging. He says, 'Jensen sandbagged. It's actually 50 times.' He's not wrong."

Moore's Law would have delivered roughly 1.5 times more performance over the same period through transistor scaling. Token generation speed in a one-gigawatt factory increased from 2 million to 700 million tokens, a 350-times improvement in two years.

5. Computing Demand Increased One Million Times as Inference Inflection Arrived

Huang declared that "computing demand has increased by 1 million times in the last two years" driven by three specific AI breakthroughs. ChatGPT in late 2022 started the generative era. OpenAI's o1 and o3 reasoning models made AI trustworthy through reflection and planning. Anthropic's Claude Code became "the first agentic model" that can read files, code, compile, test, and iterate autonomously.

Huang explained the computational shift. "For the first time, you don't ask the AI what, where, when, how. You ask it create, do, build. AI now has to think. In order to think, it has to inference. AI has to read, it has to inference. It has to reason, it has to inference."

He noted that Claude Code "has revolutionized software engineering" and that "100% of NVIDIA is using a combination of, or oftentimes all three of them, Claude Code, Codex, and Cursor." The combination of 10,000x increase in compute per task and 100x increase in usage explains the million-times total demand growth.

6. Token Pricing Will Segment Into Five Tiers From Free to $150 Per Million

Huang presented a framework for AI infrastructure economics showing how data centers will monetize different performance levels. Token pricing segments into free tier with high throughput and lower speed, $3 per million tokens for medium tier, $6 per million for higher tier, $45 per million for premium, and $150 per million tokens for ultra-premium.

He gave a research use case example. "Suppose you were to use 50 million tokens per day as a researcher at $150 per million tokens. As it turns out, as a research team, that's not even a thing."

The architecture deployed determines which tiers can be served. In a one-gigawatt factory distributing 25% of power across each tier, Blackwell generates five times more revenue than Hopper, Vera Rubin generates five times more revenue than Blackwell, and Vera Rubin with Groq delivers another 35 times improvement at the premium tier.

7. OpenClaw Gets Enterprise Security Through NemoClaw Reference Design

Huang called OpenClaw "the most popular open source project in the history of humanity" and said it "exceeded what Linux did in 30 years" in just weeks. He explained its function as having "open sourced essentially the operating system of agentic computers. It is no different than how Windows made it possible for us to create personal computers. Now, OpenClaw has made it possible for us to create personal agents."

NVIDIA created NemoClaw, an enterprise-secure reference design that integrates OpenShell technology with network guardrails and privacy routers. Huang outlined the security challenge. "Agentic systems in the corporate network can have access to sensitive information, it can execute code, and it can communicate externally. Obviously, this can't possibly be allowed."

NemoClaw addresses this while remaining compatible with existing enterprise policy engines. He stated that every company needs "an OpenClaw strategy. Just as we all needed to have a Linux strategy, we all needed to have a HTTP, HTML strategy, which started the internet... Every company in the world today needs to have an OpenClaw strategy."

8. BYD, Hyundai, Nissan, Geely Join Platform for 18 Million Robotaxis Annually

NVIDIA announced four new automaker partnerships for its autonomous vehicle platform adding BYD, Hyundai, Nissan, and Geely. Combined with existing partners Mercedes, Toyota, and GM, these manufacturers produce 18 million vehicles annually. Huang also announced a multi-city deployment partnership with Uber to integrate robotaxi-ready vehicles into their network.

He demonstrated the Alpamayo reasoning system with real-time narration clips where the AI explained its decisions. "I'm changing lanes to the right to follow my route" and "There's a double-parked vehicle in my lane. I'm going around it." When prompted "Hey, Mercedes-Benz, can we speed up?" the system responded "Sure, I'll speed up."

Huang called Alpamayo "the world's first thinking and reasoning autonomous vehicle AI" and described the development as "the ChatGPT moment of self-driving cars has arrived."

9. Rubin Ultra Connects 144 GPUs While Feynman Scales to 576 GPUs

The product roadmap extends through two more generations beyond Vera Rubin. Rubin Ultra introduces the Kyber rack system where compute nodes insert vertically into a midplane rather than sliding horizontally. This architecture connects 144 GPUs in one NVLink domain using backplane-mounted NVLink switches instead of copper cables.

The Oberon variant uses copper plus optical scale-up to reach NVLink 576. After Rubin Ultra comes Feynman, which includes a new GPU, the LP40 accelerator built as Groq's next generation incorporating NVIDIA's NVFP4 computing structure, Rosa CPU, BlueField-5, and ConnectX-10 networking.

Huang addressed the copper versus optical question. "A lot of people have been asking, 'Jensen, is copper going to still be important?' The answer is yes. 'Jensen, are you going to scale up optical?' Yes. 'Are you gonna scale out optical?' Yes." Every annual architecture will support both approaches.

10. Supply Chain Manufactures Multi-Gigawatts of AI Infrastructure Per Month

Huang stated current manufacturing capacity as "thousands a week of these systems, essentially multi-gigawatts of AI factories per month inside our supply chain." Vera Rubin is in full production with early sampling "going incredibly well." Grace Blackwell GB300 racks continue in full production alongside the Vera Rubin ramp.

The Groq LP30 chip is in volume production at Samsung. Spectrum-X co-packaged optics switches are in full production. Huang thanked Samsung for manufacturing the Groq LP30 chip and said "they're cranking as hard as they can."

11. IBM, Dell, Google Deliver 80% Cost Reductions Through Data Acceleration

NVIDIA announced integrations into enterprise data platforms. IBM watsonx.data now uses cuDF, NVIDIA's structured data library, with results demonstrated through Nestlé's global supply chain where data mart refreshes that ran a few times daily on CPUs now run five times faster at 83% lower cost on GPUs, processing every supply, order, and delivery event across 185 countries.

Dell's AI Data Platform integrates both cuDF and cuVS, the vector store library, with NTT DATA deployments showing speedups. Google Cloud's BigQuery acceleration helped Snapchat reduce computing costs by nearly 80%.

Huang explained the change happening in enterprise computing. "We used to have humans using the storage systems. We used to have humans using SQL. Now we're gonna have AIs using these storage systems."

12. Six Open Model Families Lead Leaderboards Across All AI Domains

NVIDIA released positions across major AI categories through six open-source model families. Nemotron-3 ranks number one on leaderboards for reasoning, voice, and research applications. Cosmos-2 provides world foundation models for physical AI simulation. Groot generation two handles general-purpose humanoid robotics.

Alpamayo powers autonomous vehicle reasoning. BioNeMo covers digital biology, chemistry, and molecular design. Earth-2 handles weather and climate forecasting. The Nemotron Coalition launched with partners including Black Forest Labs, Cursor, LangChain, Mistral, Perplexity, Reflection, Sarvam, and Thinking Machines Lab, which is Mira Murati's new company.

Huang explained the commitment behind open models. "Our models are valuable to all of you because number one, it's on the top of the leaderboard. Most importantly, it's because we are not gonna give up working on it. We're gonna keep on working on it every single day."

13. Every SaaS Company Will Become AaaS as Engineers Get Token Budgets

Huang outlined the enterprise transformation. "Every single IT company, every single company, every SaaS company will become a AaaS company, an agentic as a service company." He described the shift in recruiting practices across Silicon Valley.

"It is now one of the recruiting tools in Silicon Valley. How many tokens comes along with my job? The reason for that is very clear, because every engineer that has access to tokens will be more productive."

His projection for NVIDIA's workforce laid out the economics. "Every single engineer in our company will need an annual token budget. They're gonna make a few hundred thousand dollars a year their base pay. I'm gonna give them probably half of that on top of it as tokens so that they could be amplified 10x."

14. Disney Demonstrates Olaf Robot Trained Entirely in Omniverse Simulation

NVIDIA showcased 110 robots across autonomous vehicles, industrial systems, and humanoids, with demonstrations of Isaac Lab for training, Newton for physics simulation, Cosmos for world models, and Groot for robotics foundation models. Disney Research brought Olaf from Frozen as a physical robot trained entirely in NVIDIA Omniverse using the Newton physics solver.

The robot walked onstage, held a conversation with Huang, and demonstrated real-time physical adaptation. Huang explained the technology. "It was because of physics using this Newton solver that runs on top of NVIDIA Warp that we jointly developed with Disney and with DeepMind that made it possible for you to be able to adapt to the physical world."

Partners announced included ABB, Universal Robots, KUKA, Caterpillar, T-Mobile for Aerial AI RAN base stations, Peritas AI, Skilled AI, Humanoid, Hexagon Robotics, Foxconn, and Noble Machines.

15. Vera CPU Already a Multi-Billion Dollar Standalone Business

NVIDIA's data center CPU exceeded internal projections after launching as a component in integrated systems. The Vera CPU uses LPDDR5 memory, unique among data center processors, and delivers what Huang called "incredible single-threaded performance" and "performance per watt that is unrivaled."

The chip was designed for agentic AI tool use and data processing workloads. Huang stated the business outcome. "We never thought we would be selling CPUs standalone. We are selling a lot of CPU standalone. This is already for sure going to be a multi-billion dollar business for us."

16. Confidential Computing Protects Models Even From Cloud Operators

NVIDIA GPUs became the first to support confidential computing where "even the operator cannot see your data, even the operator cannot touch or see your models," according to Huang. This enables protected deployment of OpenAI and Anthropic models across different cloud regions and supports sovereign AI development for countries building their own infrastructure.

Huang highlighted the Palantir and Dell partnership. "The three of our companies have made it possible to stand up a brand-new type of AI platform, the Palantir Ontology platform. We could stand up these platforms in any country, in any air-gapped region, completely on-prem, completely on-site, completely in the field."

17. CUDA Celebrates 20 Years With Hundreds of Millions of Installed GPUs

Huang opened the keynote by celebrating CUDA's 20-year milestone and explained the installed base. "We've been dedicated to this architecture for 20 years... It has taken us 20 years to now have built up hundreds of millions of GPUs and computing systems around the world that run CUDA."

He described the flywheel effect where installed base attracts developers who create new algorithms that achieve breakthroughs like deep learning, which leads to new markets and larger ecosystems.

Continuous software updates extend useful life beyond hardware expectations. "Ampere, that we shipped some six years ago, the pricing of Ampere in the cloud is going up" because ongoing optimizations make six-year-old hardware more valuable today than when it shipped.

18. DSX Platform Provides Digital Twin for Designing AI Factories

NVIDIA Omniverse DSX launched as a platform for AI factory design and operation. The system simulates mechanical, thermal, electrical, and network systems while integrating with grid power for dynamic adjustment. Max-Q technology optimizes across power and cooling to eliminate wasted capacity.

Partners include PTC Windchill, Dassault Systèmes, Jacobs, Siemens, Cadence, ETAP, Procore, Phaidra, and Emerald. Huang stated efficiency gains possible through proper design. "There's no question in my mind there's a factor of two in here, and a factor of two at the scale we're talking about is gigantic."