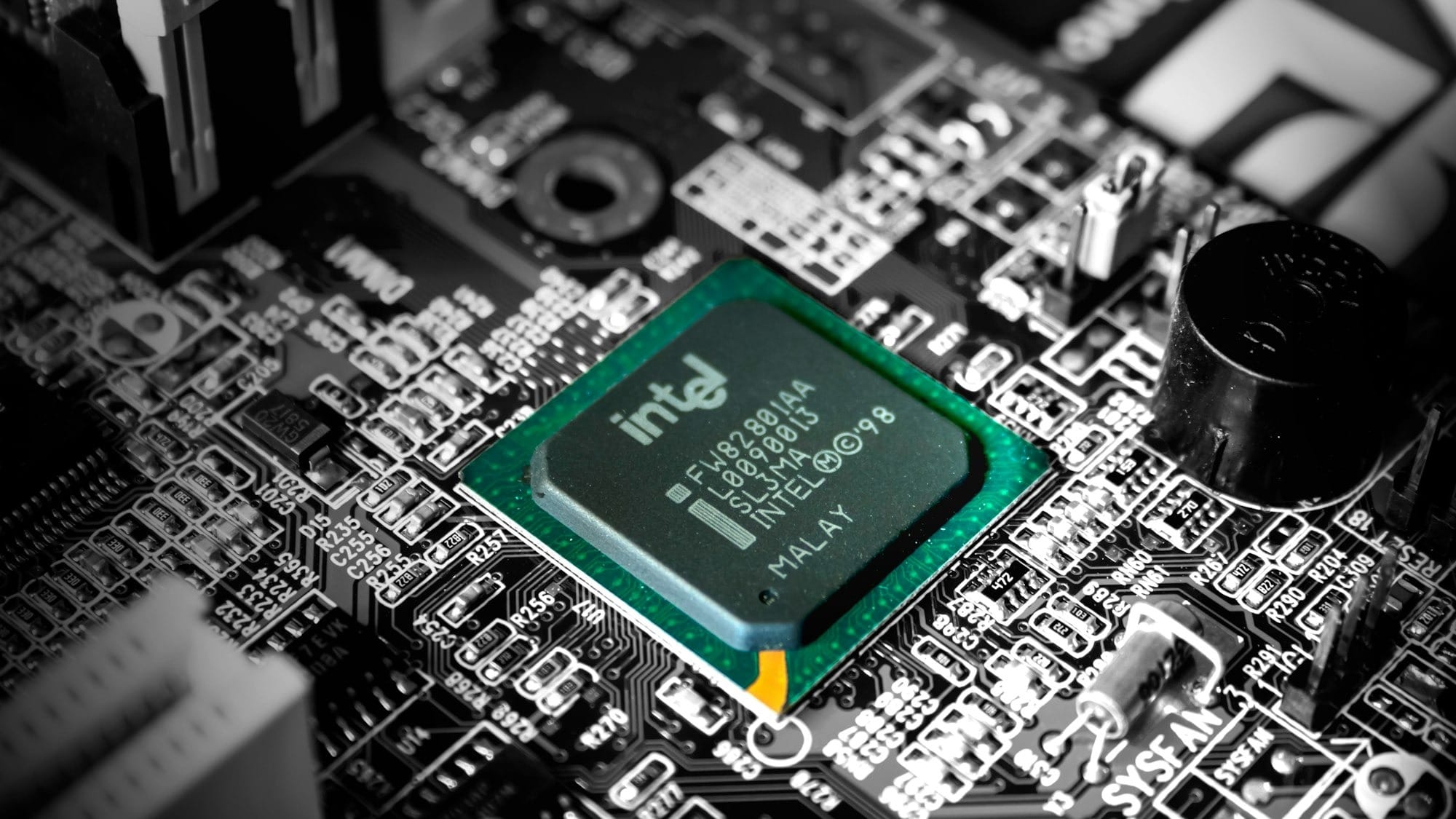

Intel and Google have announced a multiyear collaboration focused on the often-overlooked side of artificial intelligence infrastructure: the systems that make AI actually run. While much of the AI conversation has centred on GPUs and accelerators, this partnership shifts attention back to CPUs and infrastructure chips, with both companies betting that balanced systems, not just raw accelerator power, will define the next phase of AI scaling.

“As AI adoption accelerates, infrastructure is becoming more complex and heterogeneous, driving increased reliance on CPUs for orchestration, data processing and system-level performance,” Intel said in a blog post announcing the partnership.

“Through this collaboration, Intel and Google will align across multiple generations of Intel® Xeon® processors to improve performance, energy efficiency and total cost of ownership across Google’s global infrastructure.”

At the heart of the deal is Google’s commitment to deploy multiple generations of Intel’s Xeon processors across its global cloud infrastructure. These chips will power a range of workloads, from coordinating large AI training jobs to handling latency-sensitive inference and general cloud computing. Google Cloud already uses Intel’s latest Xeon 6 processors in its C4 and N4 instances, and this agreement ensures tighter alignment with Intel’s CPU roadmap for years to come.

But CPUs are only part of the story. The two companies are also expanding their work on custom infrastructure processing units, or IPUs. These are programmable chips designed to offload networking, storage, security, and virtualisation tasks from the main CPU.

By moving these “overhead” responsibilities to IPUs, Google can free up CPU capacity for AI and cloud workloads without adding complexity to its systems. In hyperscale data centres, that efficiency can translate directly into lower costs and more predictable performance.

“AI is reshaping how infrastructure is built and scaled,” said Lip-Bu Tan, CEO of Intel. “Scaling AI requires more than accelerators – it requires balanced systems. CPUs and IPUs are central to delivering the performance, efficiency and flexibility modern AI workloads demand.”

“CPUs and infrastructure acceleration remain a cornerstone of AI systems—from training orchestration to inference and deployment,” said Amin Vahdat, SVP & Chief Technologist, AI Infrastructure, Google. “Intel has been a trusted partner for nearly two decades, and their Xeon roadmap gives us confidence that we can continue to meet the growing performance and efficiency demands of our workloads.”

As AI workloads become more agentic and system-heavy, there is growing recognition that GPUs alone aren’t enough. Even Nvidia’s own infrastructure leadership has pointed out that CPUs are increasingly the bottleneck in AI systems. Intel and Google appear to be leaning into that shift, arguing that the real AI race is now about how well the different components of a system work together.

The announcement also had an immediate market effect. Intel shares rose between 3% and 5% following the news, extending a rally that has lifted the stock sharply over the past year. Alphabet’s shares ticked up more modestly.