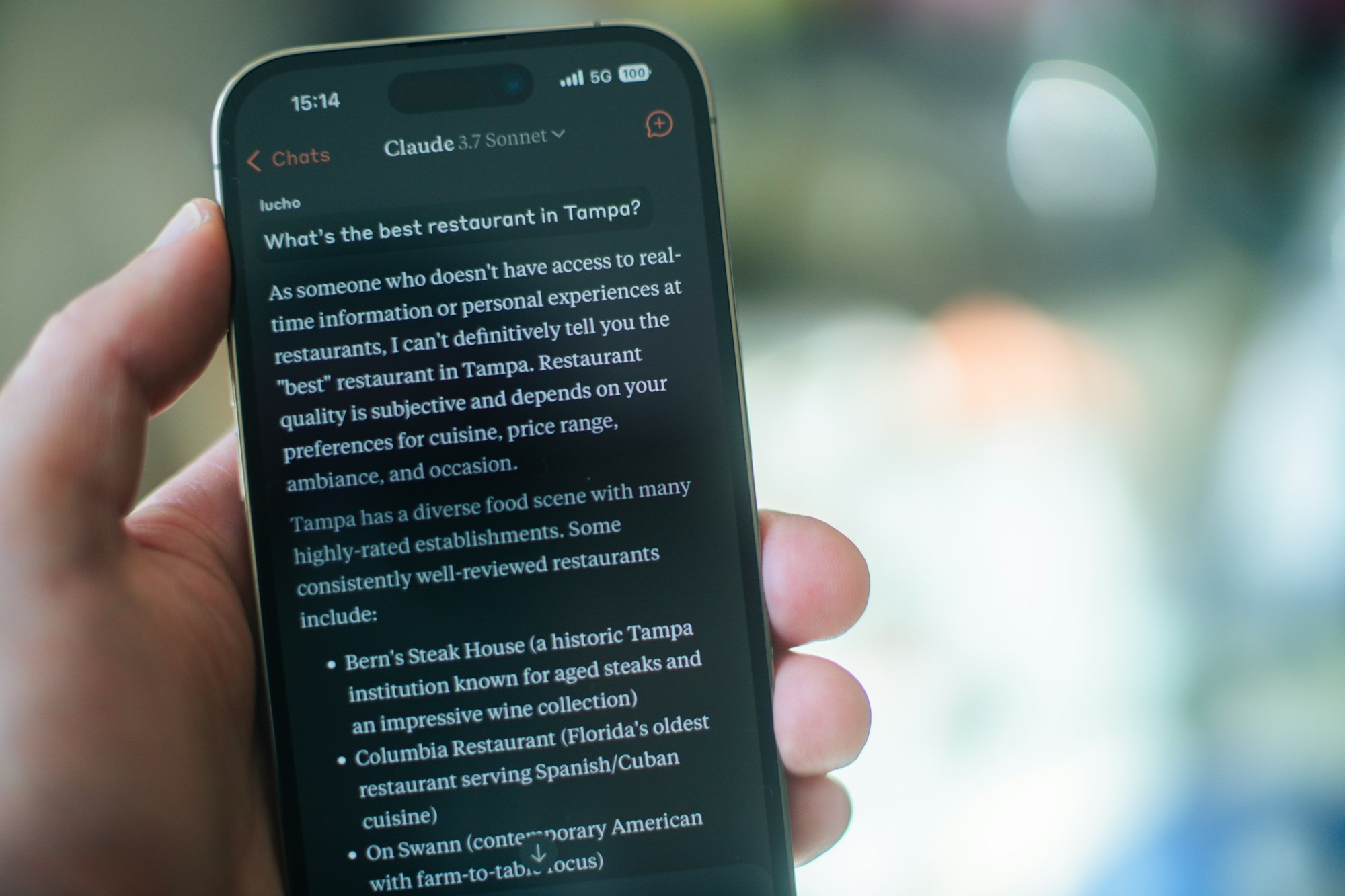

On Monday, AI startup Anthropic published a blog post accusing three Chinese AI companies (DeepSeek, Moonshot, and MiniMax) of improperly using Anthropic’s chatbot, Claude, to train their own models.

In the post, Anthropic wrote, “We have identified industrial-scale campaigns by three AI laboratories—DeepSeek, Moonshot, and MiniMax—to illicitly extract Claude’s capabilities to improve their own models. These labs generated over 16 million exchanges with Claude through approximately 24,000 fraudulent accounts, in violation of our terms of service and regional access restrictions.”

Anthropic said the companies used a method called “distillation,” in which a smaller “student” model is trained on the outputs of a larger, more capable “teacher” model.
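For readers curious about the mechanics, the toy Python sketch below shows the classic “soft-label” form of distillation: a student model never sees labeled training data, only the teacher’s output probabilities, and learns to reproduce them. Everything here is a hypothetical illustration, not a description of how any named lab works: the three-class setup and the names `teacher_logits`, `distill`, and `student_probs` are invented for the example. Note also that someone querying a chatbot generally sees only the text it returns, not its internal probability scores, so distilling a chatbot in practice looks more like fine-tuning a model on large volumes of its responses.

```python
import math
import random

def softmax(logits, temperature=1.0):
    # Temperature-scaled softmax; a higher temperature yields "softer" probabilities.
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def teacher_logits(x):
    # Hypothetical "large" teacher: a fixed scoring function over 3 classes.
    return [2.0 * x[0] - x[1], x[0] + x[1], -x[0] + 2.0 * x[1]]

def distill(inputs, temperature=2.0, lr=0.5, epochs=200):
    # Student: a linear model with one weight row (features + bias) per class.
    n_classes, n_features = 3, len(inputs[0])
    weights = [[0.0] * (n_features + 1) for _ in range(n_classes)]
    for _ in range(epochs):
        for x in inputs:
            # Soft targets come only from querying the teacher, not from labeled data.
            target = softmax(teacher_logits(x), temperature)
            feats = list(x) + [1.0]
            logits = [sum(w * f for w, f in zip(row, feats)) for row in weights]
            pred = softmax(logits, temperature)
            # Gradient of cross-entropy(target, pred) w.r.t. each logit is (pred - target) / T.
            for k in range(n_classes):
                g = (pred[k] - target[k]) / temperature
                for j in range(n_features + 1):
                    weights[k][j] -= lr * g * feats[j]
    return weights

def student_probs(weights, x, temperature=1.0):
    # Run the trained student on a new input.
    feats = list(x) + [1.0]
    logits = [sum(w * f for w, f in zip(row, feats)) for row in weights]
    return softmax(logits, temperature)

if __name__ == "__main__":
    random.seed(0)
    queries = [(random.uniform(-1, 1), random.uniform(-1, 1)) for _ in range(50)]
    student = distill(queries)
    probe = (0.3, -0.6)
    print("teacher:", softmax(teacher_logits(probe)))
    print("student:", student_probs(student, probe))
```

After training, the student’s predictions on an unseen input closely track the teacher’s, even though the student learned only from the teacher’s answers to 50 queries.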

The accusation echoes earlier claims by OpenAI, which also alleged that Chinese firms used its systems to accelerate development of their own models. In a memo to U.S. lawmakers, OpenAI described such efforts as attempts to “free-ride” on American AI advances.

The dispute has grown from a technical matter into a geopolitical contest. The U.S. has tightened export controls on advanced chips to slow China’s progress, and Anthropic argues that large-scale distillation undermines those controls by allowing labs to “close the competitive advantage” without building their models from scratch.

The Chinese companies named have not publicly responded to Anthropic’s claims.

Anthropic itself has faced lawsuits over how it sourced training data. In 2025, it agreed to a $1.5 billion settlement with authors and publishers over copyrighted books. OpenAI is fighting its own legal battle with The New York Times over the alleged use of news articles in training. Both companies have denied wrongdoing.

Anthropic says it is strengthening its detection systems and sharing intelligence with partners. It is also calling for coordinated action across the industry and among policymakers.