There’s a strange irony happening in tech right now. The same companies training AI models on enormous amounts of user data are now racing to convince people their AI is “private.”

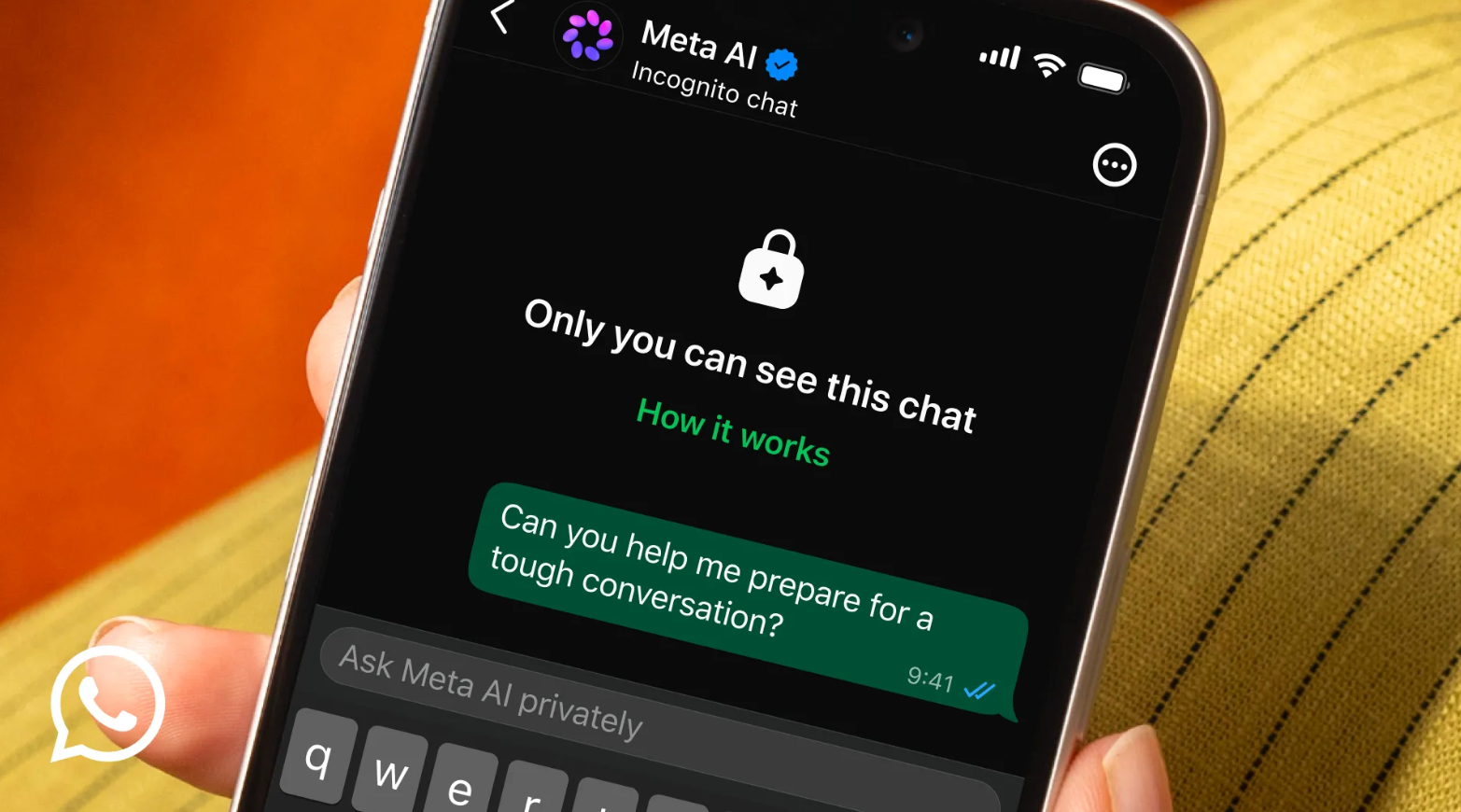

Meta is the latest to join that race. The company, which is already dealing with lawsuits, AI controversies, and growing scrutiny over how it handles user data, has announced a new feature called “Incognito Chat” for WhatsApp and Meta AI. The idea is simple: you can now have conversations with Meta AI that disappear once you close the app, and according to Meta, “no one can read your conversation, not even us.”

The feature works like a temporary private room for AI chats. Once enabled, your messages won’t be stored, the AI won’t remember previous conversations, and the session resets entirely after you leave. Meta says the chats are processed inside a secure environment inaccessible even to the company itself.

“Ten years ago we brought the world end-to-end encryption and now we are extending this privacy to chats with Meta AI,” the company wrote in a blog post.

Meta CEO Mark Zuckerberg described it as “the first major AI product where there is no log of your conversations stored on servers.”

And honestly, the timing makes sense. More people are beginning to use AI for deeply personal things: relationship advice, financial stress, career uncertainty, health fears, and emotional support. AI chatbots are quietly becoming therapists, search engines, and sounding boards all at once.

But that same privacy promise is also where the discomfort starts creeping in.

Because disappearing AI chats raise a difficult question: what happens when conversations involve danger?

OpenAI’s ChatGPT, Google’s Gemini, and Anthropic’s Claude all offer temporary or private chat modes, but they work differently. In most of these tools, chats are still stored for a limited period, often ranging from days to weeks, mainly for safety review, abuse monitoring, or system improvement. Meta’s Incognito Chat goes further: according to the company, no logs are stored in the first place.

Mashable reported that regular Meta AI chats can sometimes trigger reviews if users discuss suicide or violence. With Incognito Chat, there would reportedly be no stored record of those conversations at all. Meta says its systems can still refuse harmful prompts and temporarily block dangerous behavior, but critics argue that completely private AI conversations create blind spots: with no record, nothing can be reviewed after the fact.

That concern becomes heavier when you look at the broader AI industry. OpenAI and Google are already facing lawsuits and investigations over allegedly harmful chatbot interactions. Meta itself has faced criticism before over its AI chatbots and safety moderation.

And then there’s the trust problem.

This is still the same Meta that has faced allegations over data scraping, AI training controversies, and platform safety issues. So when the company says your AI conversations are completely invisible, many users will probably pause before taking that at face value.