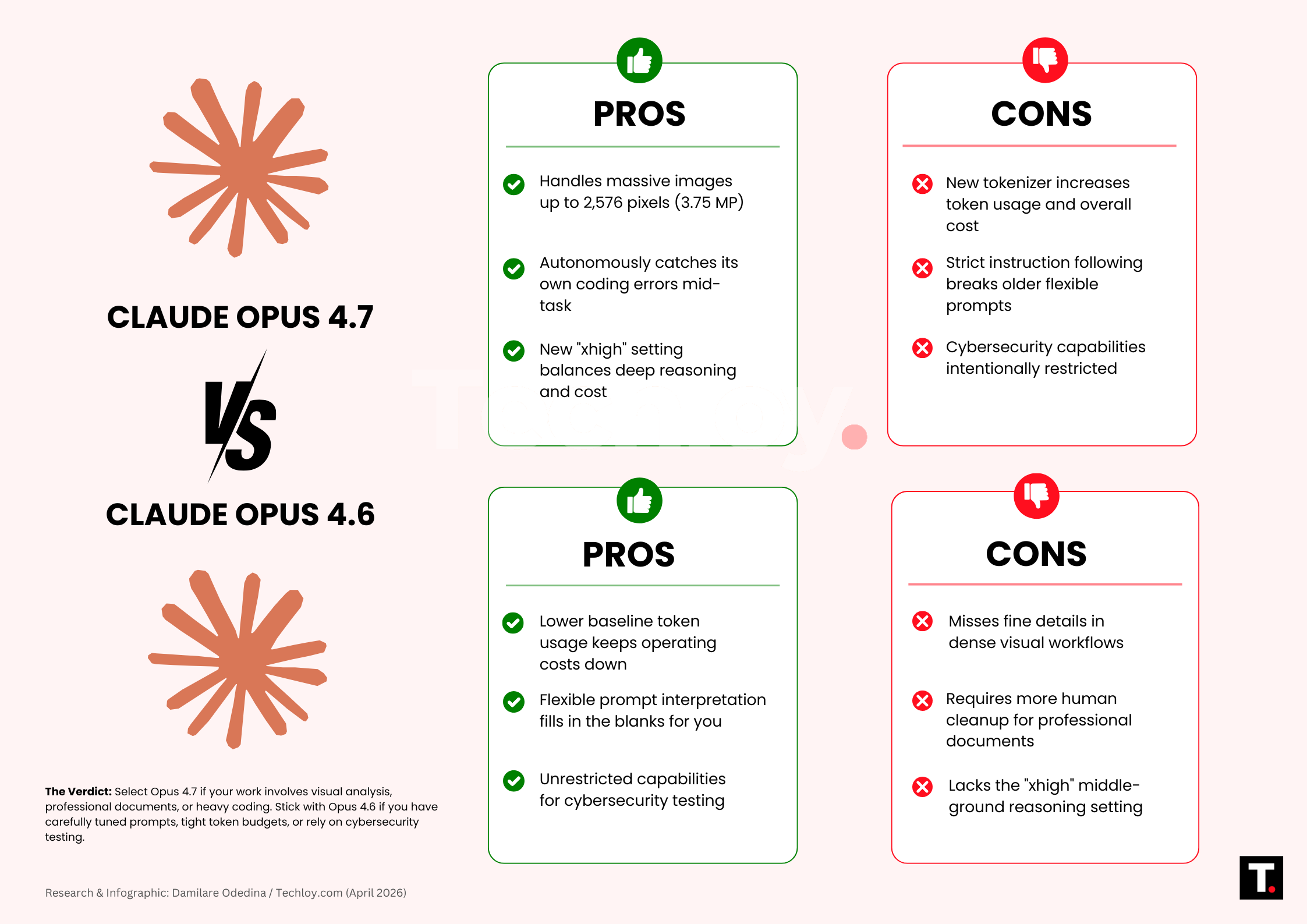

About ten weeks after shipping Opus 4.6 with its 1M-token context window and strong coding abilities, Anthropic released Opus 4.7 on April 16. This is more than a minor update: it targets specific weaknesses, expands what you can realistically hand off to AI, and makes a few clear tradeoffs.

According to the release notes, Opus 4.7 is better at certain things, costs somewhat more to run, and requires extra work if your setup is already finely tuned for 4.6. Let's break down exactly what changed so you can decide if the switch from Opus 4.6 makes sense for your work.

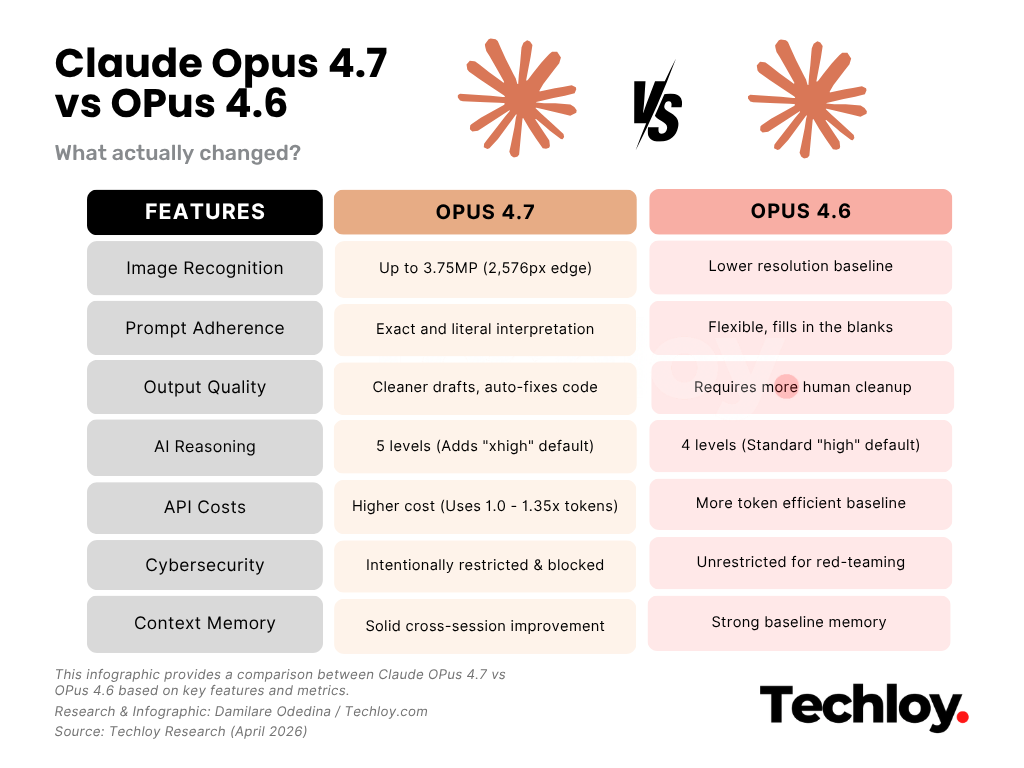

/1. Image Recognition and Vision Capabilities

This is the biggest single change in 4.7. The model now accepts images up to 2,576 pixels on the long edge, which is roughly 3.75 megapixels. That is over three times the resolution any prior Claude model handled.

For visual workflows, the gap is impossible to ignore. Teams reading detailed screenshots, extracting data from technical diagrams, or looking at complex UI mockups will see 4.7 catch details 4.6 missed. One early tester, XBOW, went from 54.5% to 98.5% on their visual benchmark after switching. That is a completely different tier of performance.

Just remember that higher resolution images eat up more tokens. If you send images but do not need every single pixel analyzed, making them smaller first will keep your bill down.
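To make the downsizing step concrete, here is a minimal sketch that computes target dimensions for a given long-edge cap while preserving aspect ratio. The function names are hypothetical, the 2,576-pixel default simply mirrors the limit quoted above, and the code only does the arithmetic; the actual resize would be done with an imaging library of your choice.

```python
def fit_long_edge(width: int, height: int, max_long_edge: int = 2576) -> tuple[int, int]:
    """Return (width, height) scaled down so the longer side is at most max_long_edge.

    Images already within the limit are returned unchanged; aspect ratio is preserved.
    """
    if width <= 0 or height <= 0:
        raise ValueError("width and height must be positive")
    long_edge = max(width, height)
    if long_edge <= max_long_edge:
        return width, height
    scale = max_long_edge / long_edge
    # Round to the nearest pixel, but never collapse a side to zero.
    return max(1, round(width * scale)), max(1, round(height * scale))


def megapixels(width: int, height: int) -> float:
    """Pixel count expressed in millions of pixels."""
    return width * height / 1_000_000


# A 5152x2912 screenshot scaled to the 2,576-pixel cap, then to a hypothetical smaller cap.
full = fit_long_edge(5152, 2912)         # (2576, 1456)
small = fit_long_edge(5152, 2912, 1288)  # (1288, 728)
print(full, megapixels(*full))
print(small, megapixels(*small))
```

A 2576x1456 image works out to about 3.75 megapixels, which lines up with the figure above. Halving the long edge cuts the pixel count to a quarter, so a smaller cap is worth trying whenever fine detail does not matter.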

/2. Prompt Adherence and Instruction Following

Opus 4.7 follows instructions much more literally than 4.6. Where 4.6 would often fill in the blanks or interpret vague directions loosely, 4.7 takes you exactly at your word.

If you write strict, specific rules, you will love this. But if you relied on 4.6 figuring things out for you, you are going to hit some walls. Anthropic even notes in its release that prompts written for earlier models may produce unexpected results.

Basically, do not assume your current prompts will work the same way. Set aside time to rewrite them.
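One way to find out which prompts need rewriting is a small regression check: run each prompt through both models and flag the ones that pass on the old model but fail on the new one. The sketch below assumes you supply your own `run_old` and `run_new` callables (wrappers around whatever client you use) and your own pass/fail checks; every name here is hypothetical, and the two "model" functions in the demo are stand-ins, not real model output.

```python
from typing import Callable


def compare_prompts(
    prompts: dict[str, str],
    run_old: Callable[[str], str],
    run_new: Callable[[str], str],
    checks: dict[str, Callable[[str], bool]],
) -> list[str]:
    """Return names of prompts whose output passes on the old model but fails on the new one."""
    regressions = []
    for name, prompt in prompts.items():
        check = checks[name]
        if check(run_old(prompt)) and not check(run_new(prompt)):
            regressions.append(name)
    return regressions


# Stand-in model functions for illustration only; these are NOT real model outputs.
def fake_old(prompt: str) -> str:
    return "- point one\n- point two"


def fake_new(prompt: str) -> str:
    return "Point one and point two."


prompts = {"summary": "Summarize the report."}
# The downstream code expects a bulleted list, which the prompt never asked for.
checks = {"summary": lambda out: out.lstrip().startswith("-")}
print(compare_prompts(prompts, fake_old, fake_new, checks))  # prints ['summary']
```

Any prompt that shows up in the result is a candidate for a more explicit rewrite, for example stating the output format instead of relying on the model to guess it.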

/3. Output Quality for Professional Deliverables

Anthropic says 4.7 produces better professional documents, and that shows up clearly in everyday work. Finance teams testing the model saw it improve from 0.767 to 0.813 on a General Finance module. Harvey (a specialized AI platform for legal professionals) recorded a 90.9% accuracy score for high-effort legal tasks. The output just requires a lot less human editing.

For coders, the gains look different. Cursor recorded an increase from 58% to 70% on its internal testing. Multiple partners saw the model catch its own errors while working, rather than waiting for human feedback. That is a big deal if you run long autonomous loops.

/4. AI Reasoning and Task Effort Levels

Opus 4.7 adds a fifth effort level called xhigh, sitting right between high and max. Anthropic set this as the new default in Claude Code, and they suggest starting there for coding.

The reason is simple. 4.7 thinks more deeply at higher effort settings, especially late in long tasks. That extra thinking gets you better answers on hard problems, but it also burns more tokens. The xhigh setting gives you a middle ground: if high was not thorough enough and max cost too much, xhigh fills that gap.

/5. API Costs and Token Efficiency

The base price is the same for both models: $5 per million input tokens and $25 per million output tokens. But 4.7 will likely cost you more to run.

The new tokenizer processes text differently, mapping the same input to roughly 1.0 to 1.35 times as many tokens, depending on the content. Add the extra tokens from the deeper reasoning settings, and your real-world bill will probably go up. Anthropic's own tests show reasonable token usage on coding tasks, but they strongly advise testing your own workload first. You can control costs by turning down the effort setting or asking for shorter answers, but the tokenizer change itself is fixed.

Test this on actual traffic before you roll it out to your whole team.
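For a rough sense of the range before you have real traffic data, the pricing and tokenizer figures above can be turned into a back-of-the-envelope estimate. This is a sketch under stated assumptions: the function name and the sample monthly volumes are made up, the multiplier is applied to output tokens as well as input tokens (the figures above only describe input), and extra reasoning tokens from higher effort settings are not modeled at all.

```python
INPUT_PRICE_PER_MTOK = 5.00    # USD per million input tokens (figure quoted above)
OUTPUT_PRICE_PER_MTOK = 25.00  # USD per million output tokens (figure quoted above)


def estimate_cost(input_tokens: int, output_tokens: int, token_multiplier: float = 1.0) -> float:
    """Estimated USD cost after scaling both token counts by token_multiplier.

    Assumption: the tokenizer change inflates input and output counts by the same factor.
    """
    scaled_in = input_tokens * token_multiplier
    scaled_out = output_tokens * token_multiplier
    return (scaled_in * INPUT_PRICE_PER_MTOK + scaled_out * OUTPUT_PRICE_PER_MTOK) / 1_000_000


# Hypothetical monthly volume measured in Opus 4.6 tokens.
monthly_in, monthly_out = 200_000_000, 40_000_000

baseline = estimate_cost(monthly_in, monthly_out)           # multiplier 1.0
worst_case = estimate_cost(monthly_in, monthly_out, 1.35)   # top of the quoted range
print(f"baseline:   ${baseline:.2f}")    # prints "baseline:   $2000.00"
print(f"worst case: ${worst_case:.2f}")  # prints "worst case: $2700.00"
```

Your own multiplier will fall somewhere in that range depending on content, which is why measuring on real traffic beats any estimate like this one.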

/6. Cybersecurity Safeguards

This is a deliberate step backward from 4.6, and Anthropic is completely open about it. Because of their new security strategy, 4.7's cyber capabilities are restricted. The model automatically blocks requests it sees as high-risk cybersecurity tasks.

Anthropic says they want to test these blocks on a restricted model before rolling out Mythos Preview later. Opus 4.7 is the test run.

Security teams doing legitimate work like penetration testing can apply for Anthropic's new Cyber Verification Program to get access. Everyone else will probably never notice the block.

/7. Context Memory and Long-Running Agents

Opus 4.7 is better at handling file system memory than 4.6. Over long projects, it remembers your notes and brings them into new tasks, meaning you spend less time explaining the context every time you start a session. This helps a lot if you run agents for days.

It is a nice bump in quality, but 4.6 was already great at long-context work, so this is not a massive change.
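If you have not used file-based memory before, the pattern looks roughly like the sketch below: notes are appended to a plain file during a session, then loaded and placed ahead of the task at the start of the next one. This is only an illustration of the general idea, not how Claude or any particular agent framework implements it; the function names, file layout, and prompt wording are all hypothetical.

```python
from pathlib import Path


def append_note(notes_path: Path, note: str) -> None:
    """Append one note as a bullet line, creating the file and its folder if needed."""
    notes_path.parent.mkdir(parents=True, exist_ok=True)
    with notes_path.open("a", encoding="utf-8") as f:
        f.write(f"- {note.strip()}\n")


def load_notes(notes_path: Path) -> str:
    """Return all saved notes, or an empty string if no notes file exists yet."""
    return notes_path.read_text(encoding="utf-8") if notes_path.exists() else ""


def build_session_prompt(task: str, notes_path: Path) -> str:
    """Prefix the task with saved notes so a new session starts with prior context."""
    notes = load_notes(notes_path)
    if not notes:
        return task
    return f"Notes from earlier sessions:\n{notes}\nCurrent task:\n{task}"
```

In a multi-day agent run, you would call `append_note` whenever something worth keeping comes up and `build_session_prompt` when a new session begins, so the context does not have to be explained again by hand.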

Should you switch?

Upgrade to 4.7 if you do visual tasks, review designs, or care about image details. That improvement alone makes the switch worth it. The same goes if you create professional documents or financial models, since 4.7 cuts down the time you spend fixing the text.

Stay on 4.6 if you have a massive library of perfectly tuned prompts, if you need Claude for security testing, or if a cost increase of up to 35 percent from the tokenizer alone would break your budget. These are not minor details; they will actually impact your work.

For heavy coding and writing, 4.7 is the better tool. Just test it on your actual tasks, watch your token count, and prepare to rewrite your prompts. Anthropic has a specific migration guide, and you should read it before moving anything important.