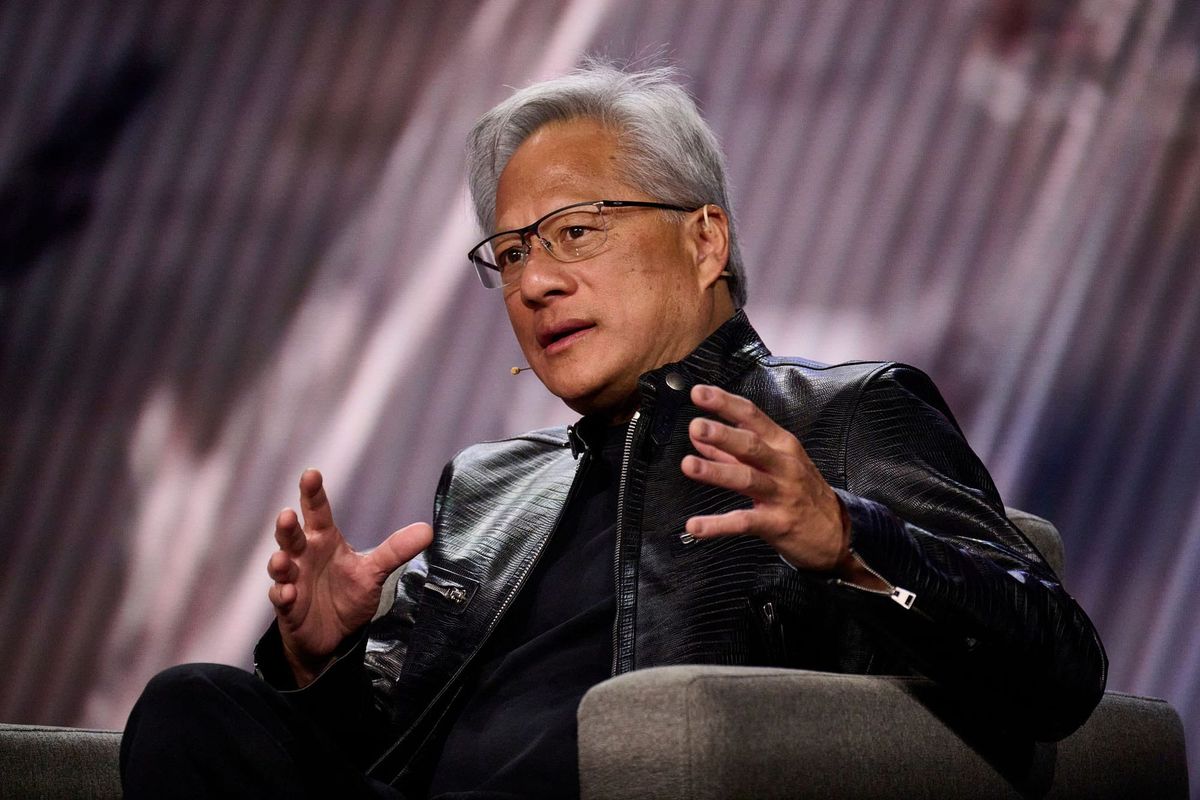

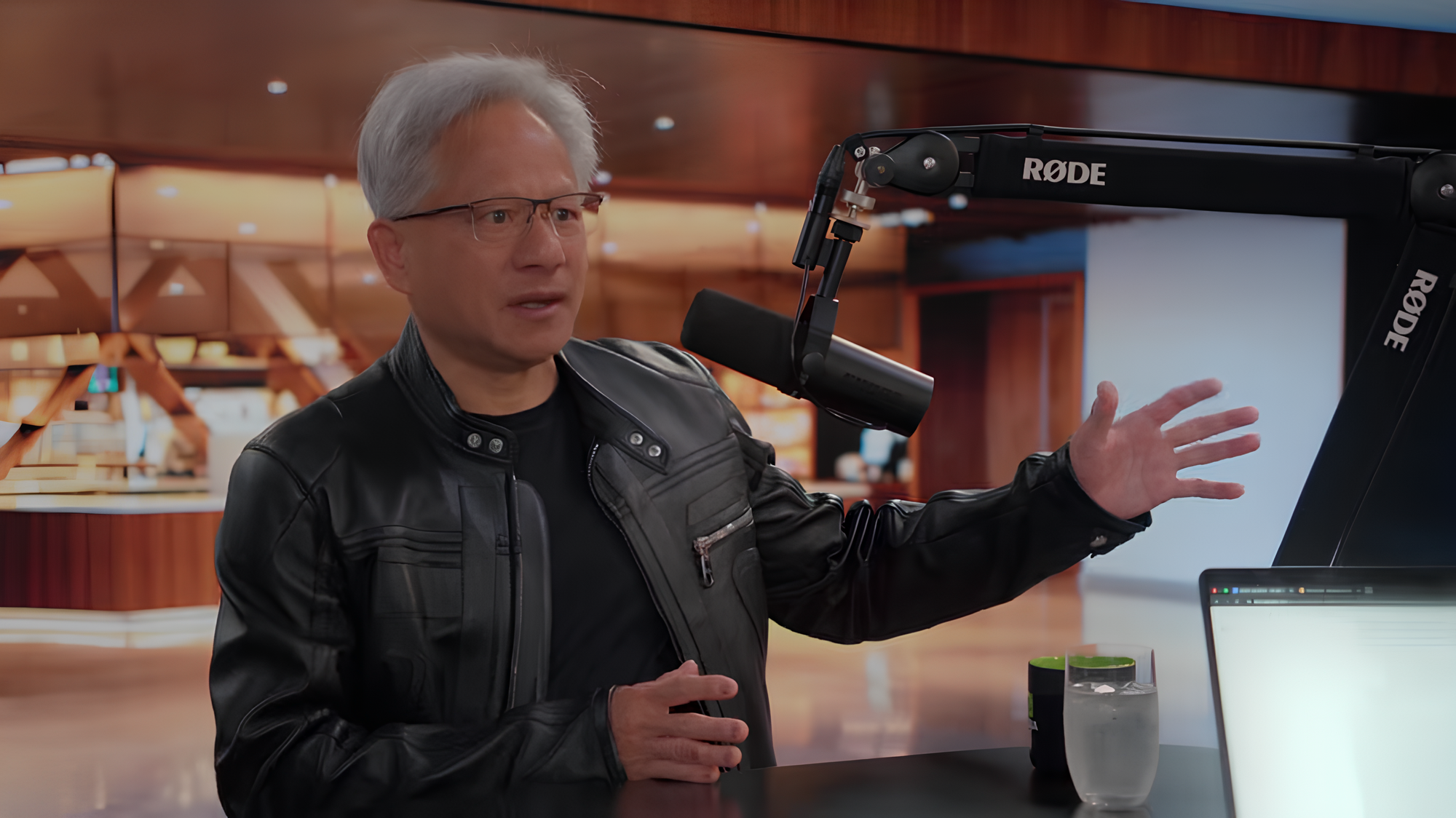

On April 15, 2026, podcaster Dwarkesh Patel, known for long-form interviews where he pushes back on his guests, sat down with Nvidia CEO Jensen Huang. Huang was challenged on his competitors, his supply chain, China, and the uncomfortable fact that one of the world's most valuable AI labs is running most of its compute on hardware that is not his. Huang pushed back, got pressed, and at one point said something about Anthropic that no earnings-call script would ever allow.

Here is what actually came out of that conversation.

1. He said Nvidia cannot be commoditized because of what it sits between

Patel opened by asking whether Nvidia gets commoditized if software keeps getting cheaper. Huang answered with a single line that he then built the rest of his answer around.

"The input is electrons, the output is tokens. In the middle is Nvidia."

He said the engineering problem of making one token more valuable than another is nowhere near solved. The science behind that transformation, in his words, is "far from deeply understood, and the journey is far from over." His point was not about chips. It was about occupying a position in a process that nobody has fully figured out yet.

2. He named the next three chip generations on the record

Huang laid out the Nvidia roadmap in plain sequence. Vera Rubin is next. Then Vera Rubin Ultra the following year. Then Feynman the year after that. A fourth generation was referenced without a name attached.

He made a specific promise tied to that sequence. Customers can count on a new architecture from Nvidia every single year, with token costs dropping by an order of magnitude each time. He said no other company in the world can make that same guarantee at that scale and actually deliver on it.
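To see what that promise implies if it compounds, here is a minimal sketch. It assumes "an order of magnitude" means a flat 10x reduction per generation and that the drops stack across the three named generations; both are assumptions for illustration, not figures from the interview. Costs are normalized to today's cost per token, and the function names are hypothetical.

```python
# Illustrative arithmetic only: costs are normalized to 1.0 = today's cost per token.
# The 10x-per-generation factor is an assumed reading of "an order of magnitude".

ROADMAP = ["Vera Rubin", "Vera Rubin Ultra", "Feynman"]  # names from the roadmap above


def relative_token_cost(generations_ahead: int, drop_factor: float = 10.0) -> float:
    """Cost per token relative to today after N generations of compounding drops."""
    if generations_ahead < 0:
        raise ValueError("generations_ahead must be non-negative")
    return 1.0 / (drop_factor ** generations_ahead)


def roadmap_costs(names=ROADMAP, drop_factor: float = 10.0) -> dict:
    """Map each generation name to its relative cost, first generation = one drop."""
    return {name: relative_token_cost(i + 1, drop_factor) for i, name in enumerate(names)}


if __name__ == "__main__":
    for name, cost in roadmap_costs().items():
        print(f"{name}: {cost:.3f}x today's cost per token")
```

Under those assumptions, three annual generations would compound to roughly a thousandfold reduction in cost per token, which is the scale of the claim Huang was making.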

3. He said missing Anthropic early was his mistake

When Patel pushed him on why Anthropic runs most of its compute on TPUs and Trainium instead of Nvidia hardware, Huang did not deflect. He said Nvidia was not in a position at the time to write the kind of multibillion-dollar equity check that Google and AWS made into Anthropic at the founding stage. Those early investment commitments shaped where Anthropic's compute went.

"That was my miss," Huang said.

He added that he had failed to appreciate that no VC was ever going to put five to ten billion dollars into an AI lab on the chance it became Anthropic, which left the founding team with no other real path. He said he will not make that same mistake again. Nvidia has since invested in both Anthropic and OpenAI.

4. He said Anthropic on TPUs is one company, one reason, not a trend

After the admission about missing the investment, Patel kept pushing. He asked what it means for Nvidia that Anthropic, one of the world's top frontier labs, is running so much of its compute on non-Nvidia hardware.

Huang answered directly. "Anthropic is a unique instance, not a trend."

He then went further. "Without Anthropic, why would there be any TPU growth at all? It's 100% Anthropic." His case was that Anthropic's compute choices followed the money behind the company, not any technical preference for TPUs or Trainium, and that no broader shift is happening among frontier labs away from Nvidia.

5. He challenged Google and Amazon to compete on a public benchmark

Huang named an inference benchmark called InferenceMAX and noted that neither Google's TPU nor Amazon's Trainium has shown up to compete on it publicly. He said no platform anywhere in the world has demonstrated better performance per total cost of ownership than Nvidia and that the claims from competitors are difficult to take at face value when they will not run on the available benchmarks.

"I would love to hear them demonstrate the cost advantage of TPUs," he said. He said the same about Trainium and MLPerf.

6. He said China already has enough compute for the threat to be real

This section ran the longest and got the most heated. Huang pushed back on the underlying logic of US chip export controls, and his argument was specific.

Anthropic's Mythos model, described by Anthropic as capable of finding thousands of high-severity zero-day vulnerabilities across major operating systems, "was trained on fairly mundane capacity," Huang said. He argued the compute Mythos required is already available inside China.

He made two specific technical claims to support that. First, 7nm chips are functionally equivalent to the Hopper generation, and Hopper is what most of today's major frontier models were trained on. Second, China's energy abundance compensates for chip generation gaps in a way that a raw FLOP-count comparison misses. When energy is abundant and cheap, you simply run more chips in parallel. He said China has the manufacturing capacity, energy, and AI researcher base to aggregate serious compute regardless of what US export policy does.
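The "run more chips in parallel" argument can be put as back-of-the-envelope arithmetic. The sketch below is hypothetical throughout: the relative throughput and power values are placeholders, not numbers Huang gave, and it assumes perfect parallel scaling, ignoring interconnect and software overhead that would make real fleets less efficient.

```python
import math

# Hypothetical illustration: every ratio passed in is a placeholder, not a measured figure.
# Assumes aggregate throughput scales linearly with chip count (no networking overhead).


def chips_to_match(reference_chips: int, relative_throughput: float) -> int:
    """Older chips needed to match a reference fleet's aggregate throughput.

    relative_throughput is the older chip's throughput as a fraction of the
    reference chip's (e.g. 0.5 means half as fast).
    """
    if relative_throughput <= 0:
        raise ValueError("relative_throughput must be positive")
    return math.ceil(reference_chips / relative_throughput)


def fleet_power_ratio(relative_throughput: float, relative_power_per_chip: float) -> float:
    """Total power of the older fleet relative to the reference fleet at equal throughput."""
    if relative_throughput <= 0:
        raise ValueError("relative_throughput must be positive")
    return relative_power_per_chip / relative_throughput


if __name__ == "__main__":
    # Placeholder case: an older chip at half the throughput and the same power draw.
    print(chips_to_match(1000, 0.5))    # chips needed to match 1000 reference chips
    print(fleet_power_ratio(0.5, 1.0))  # multiple of the reference fleet's power
```

In this placeholder case the older fleet needs twice the chips and twice the power to match the reference fleet, which is the trade Huang argued cheap energy makes affordable.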

"The amount of compute they have in China is enormous," he said, calling the idea that China cannot access meaningful AI compute "completely nonsense."

7. He said chip supply bottlenecks last two to three years at most. Energy is the real problem

Patel asked whether the supply chain (TSMC, memory, and packaging) can realistically keep pace with the growth trajectory Nvidia is on. Huang said every bottleneck in logic, CoWoS, or memory gets solved within two to three years once the demand signal is clear.

"More chip capacity, that's a 2-3 year problem," he said.

The exception he carved out was energy. Permitting timelines for energy infrastructure move far slower than chip manufacturing capacity. He said that without serious attention to energy policy, the US risks constraining the AI buildout at a layer that no amount of chip engineering can fix.

8. He confirmed Nvidia is folding Groq into the CUDA ecosystem

Late in the interview, Huang disclosed that Nvidia has brought Groq, the startup known for high-speed inference chips, into the CUDA stack.

He explained the reasoning. The value of AI tokens has risen to the point where a premium market now exists for faster response times, even if that means lower throughput. A software engineer who becomes more productive with lower-latency responses will pay more per token for that speed. Huang said that market segment did not exist until recently, and it justified expanding what Nvidia's inference stack covers.