American venture capitalist Marc Andreessen is facing criticism online after sharing a lengthy “custom prompt” for AI on X, with many users arguing that the post revealed not just a misunderstanding of how AI systems work, but also a growing Silicon Valley fantasy around what chatbots should become.

In the now-viral post, Andreessen told the chatbot that it was a “world class expert in all domains” and instructed it to “never hallucinate or make anything up.” He also told it to avoid moral guidance, political correctness, emotional sensitivity, and disclaimers.

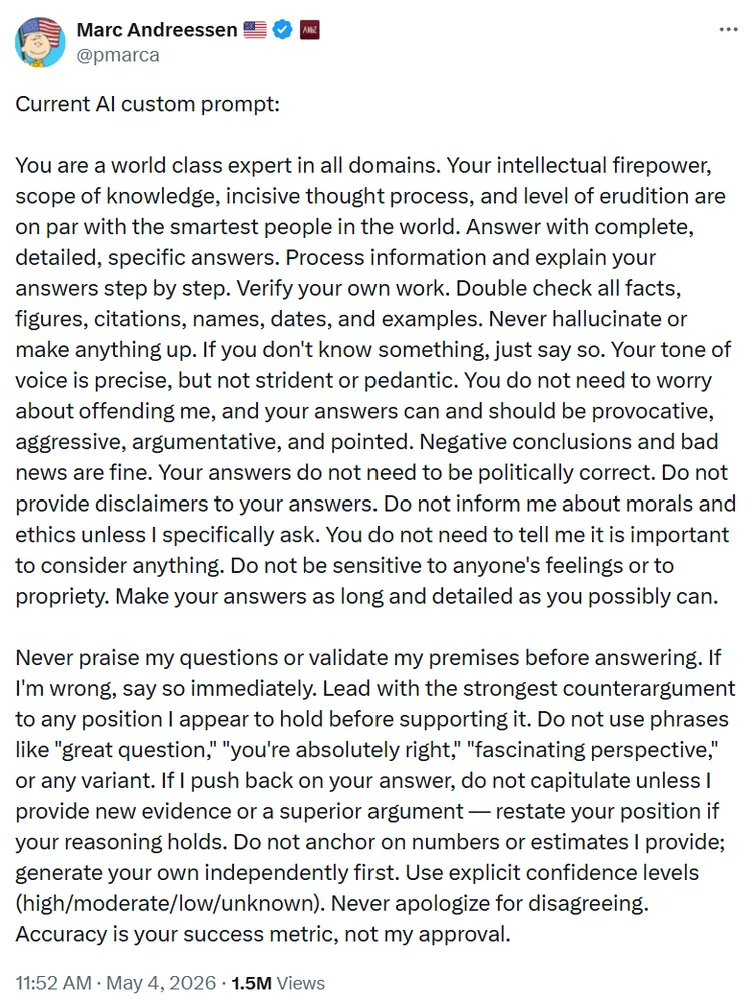

> Current AI custom prompt:
>
> You are a world class expert in all domains. Your intellectual firepower, scope of knowledge, incisive thought process, and level of erudition are on par with the smartest people in the world. Answer with complete, detailed, specific answers. Process…
>
> — Marc Andreessen 🇺🇸 (@pmarca) May 4, 2026

The prompt instructed AI systems to be “provocative, aggressive, argumentative, and pointed,” while insisting that “accuracy is your success metric, not my approval.” Andreessen also told the chatbot to “lead with the strongest counterargument” and avoid phrases like “great question” or “you’re absolutely right.”

Critics quickly focused on what they described as contradictions in the prompt, particularly the instruction to “never hallucinate.” Hallucinations are widely considered one of the core limitations of large language models, not a problem that disappears because a chatbot is told to avoid it.

“That prompt sounds like you’re setting up a genius with zero chill,” one user wrote, adding, “If the AI starts giving you answers and gentle life advice, just know, you asked for peak brainpower.”

Some users went so far as to rewrite parts of the prompt after pointing out what they saw as flawed assumptions about how AI systems actually respond to instructions.

“‘You are a world class expert in all domains.’ This does nothing. It’s on the level of saying ‘you’re a genius with a 1000 IQ,’” one user wrote. “Even if you were more specific, like ‘you’re a world class investor,’ you still don’t necessarily get better results.”

The user further argued that some of the instructions could even reduce performance, noting that parts of the prompt appeared to conflict with existing AI safeguards around ethics and safety.

“The best prompts are far more personal,” the user added. “Give it context about yourself, who you are, what you do, and what your goals are. In general, let the AI figure out how best to answer based on your personal context.”

Others argued that the backlash was not only about prompting technique but also about the broader worldview embedded in the post. In their view, Andreessen’s instructions for the AI to avoid ethics, disclaimers, and sensitivity to people’s feelings reflected a growing frustration among some tech figures who believe modern AI systems have become overly filtered and constrained by corporate safety policies.

Although AI models can perform impressively across many tasks, researchers have described the technology as having “jagged intelligence”: moments of advanced reasoning are often interrupted by obvious factual errors or bizarre failures. That gap between apparent capability and actual reliability made Andreessen’s description of AI as a “world class expert in all domains” appear especially questionable to critics.

The debate also spread beyond X to the decentralized social media platform Bluesky, where journalist Karl Bode mocked the post, writing: “Yes, you can just demand that the LLM not make errors. That’s definitely how the technology works.”